When I was taking undergrad real analysis, the professor, Ali Tahzibi, was teaching us about the Stone-Weierstrass theorem, and he asked my class: let where is the supremum norm. The theorem says , but can the convergence be arbitrarily slow? He offered one million bucks for the solution instantly grabbing my attention.

You can scarcely do mathematical analysis without polynomial approximations, so the Stone-Weierstrass theorem is foundational, and if it was possible to improve its rate of convergence that would be a major result. Initially, it seemed impossible to construct a function so pathological that even , and the question was asking for a lot more than that. There are upper bounds on for large classes of functions, including Hölder continuous, or those whose modulus of continuity is good enough. In particular, even famously pathological functions such as Weierstrass's example of a continuous nowhere differentiable function, or (with probability ) a Wiener process have upper bounds on .

I pondered the question for a few days, and finally found a counterexample proving that the answer is no by relating approximations

to the number of roots of a polynomial. Precisely, for any sequence

of positive real numbers converging to , there is a function

such that

for all . He encouraged me to submit it to the

American Mathematical Monthly for publication, which I did, and it was accepted a week later. I hope all my publications

throughout my career follow a similar Blitzkrieg

timeframe.

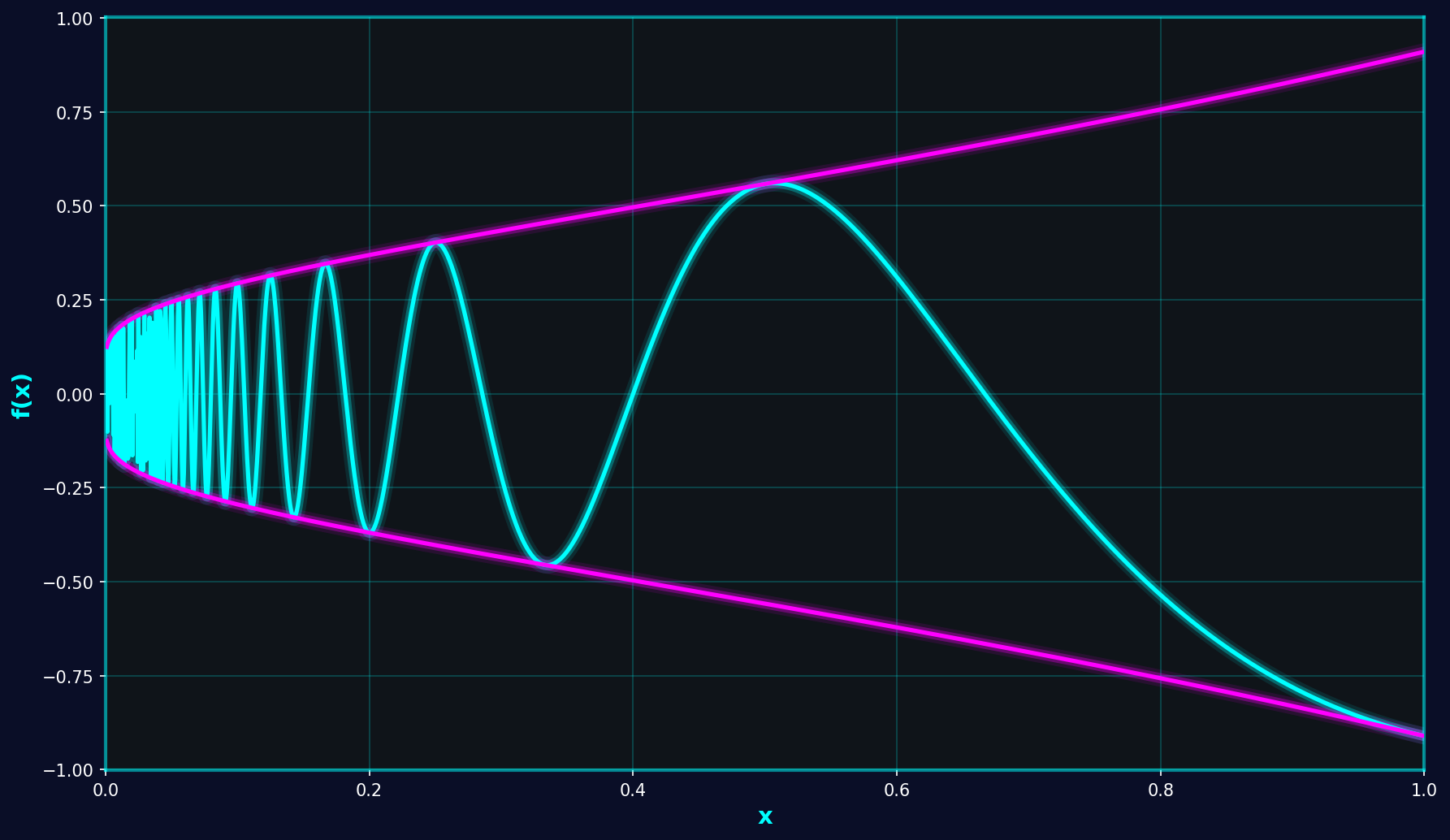

The construction of the counterexample is brutally simple. The key insight is that to force a polynomial approximant to have a high degree, you must force it to have a lot of roots. We can do this by making oscillate around , forcing a good-enough approximant to do the same. Fix a sequence of numbers decreasing to , and let be an increasing continuous function such that and Where for all , which can be achieved defining as affine by parts in the intervals .

A polynomial approximation satisfying must have a root in each of the intervals i.e. at least roots so its degree is more than . Indeed, for each positive integer , we have , and

so and have the same sign.

In the literature, there is an equivalent construction. [1] They achieve it by letting where is a Chebyschev polynomial. Using Chebyschev polynomials as a basis is very useful to determine approximation-theoretical properties but unfortunately not much else. The construction as a lacunary series further obscures the properties of f. I think my construction gives more insight into why convergence is slow.

I think this is a pretty fun result, and I'm grateful to professor Tahzibi for suggesting it.

[1] Cheney, E. W. (2000). Introduction to Approximation Theory. Providence, RI: American Mathematical Society.